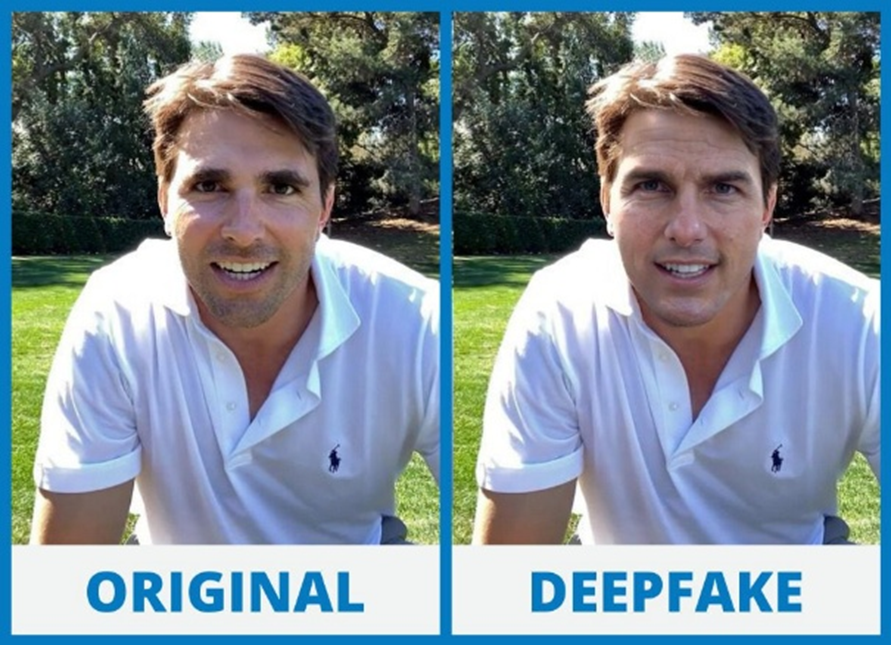

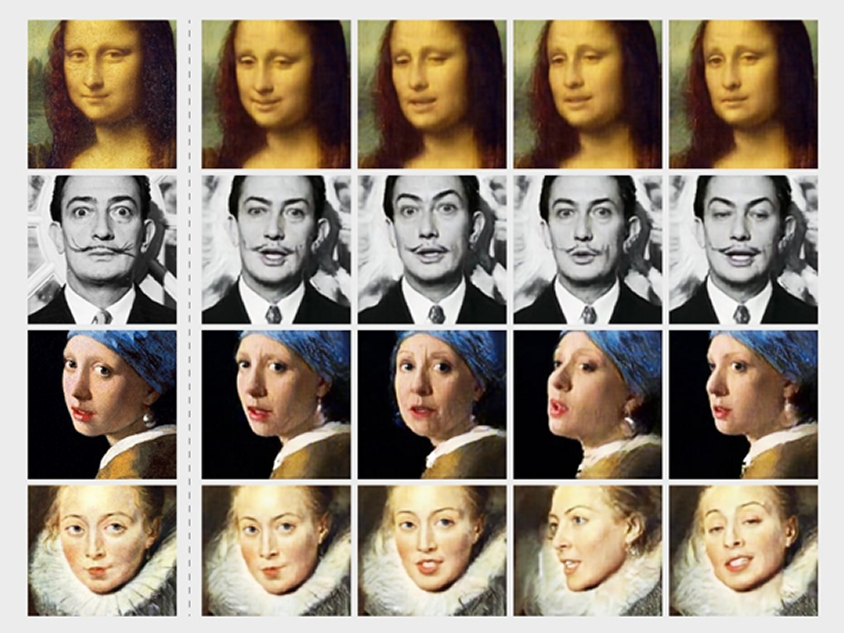

From news-filtering algorithms to virtual assistants recommending articles, AI has the power to shape our perception of reality. However, it has also been used as a tool for creating and amplifying misinformation. This is where UX design plays a fundamental role.

A Scientific American article explains that most people do not intend to share false information; they fall for it when they don't stop to think carefully about what they are reading:

“Our research finds that most people do not wish to share inaccurate information (in fact, over 80 percent of respondents felt that it’s very important to only share accurate content online) and that, in many cases, people are fairly good (overall) at distinguishing legitimate news from false and misleading (hyperpartisan) news. Research we’ve conducted consistently shows that it’s not partisan motivations that lead people to fail to distinguish between true and false news content, but rather simple old lazy thinking. People fall for fake news when they rely on their intuitions and emotions, and therefore don’t think enough about what they are reading—a problem that is likely exacerbated on social media, where people scroll quickly, are distracted by a deluge of information, and encounter news mixed in with emotionally engaging baby photos, cat videos and the like.”

UX designers are responsible not only for the aesthetics and functionality of an experience, but also for its transparency and ethics.

UX designers can enhance transparency by creating interfaces that clearly explain how content is selected. This could include messages or alerts indicating whether an algorithm has prioritized content based on previous interests or user behaviour patterns. Providing users with options to modify content display preferences—such as selecting trusted sources or adjusting algorithm settings—would restore some control to users and reduce the hidden influence of algorithms.
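To make the idea concrete, here is a minimal sketch of how user-controlled preferences and a disclosure message might sit behind a feed. Everything in it is an assumption made for illustration: the `FeedItem` and `FeedPreferences` shapes, the `relevanceScore` field, the `buildFeed` function, and the disclosure wording do not come from any particular platform.

```typescript
// Hypothetical shape of a feed item; relevanceScore stands in for
// whatever ranking signal a real platform would compute.
interface FeedItem {
  id: string;
  source: string;
  publishedAt: number; // Unix timestamp in milliseconds
  relevanceScore: number;
}

// Hypothetical user-facing settings.
interface FeedPreferences {
  trustedSources: string[]; // an empty list means "no source filter"
  personalized: boolean; // false means plain chronological order
}

// A feed item plus the message that tells the user how it was selected.
interface DisplayedItem extends FeedItem {
  disclosure: string;
}

function buildFeed(items: FeedItem[], prefs: FeedPreferences): DisplayedItem[] {
  // Keep only sources the user marked as trusted, if any were chosen.
  const allowed =
    prefs.trustedSources.length === 0
      ? items
      : items.filter((item) => prefs.trustedSources.includes(item.source));

  // Rank by relevance only when the user has opted in to personalization;
  // otherwise show the newest items first.
  const sorted = [...allowed].sort((a, b) =>
    prefs.personalized
      ? b.relevanceScore - a.relevanceScore
      : b.publishedAt - a.publishedAt
  );

  // Tell the user which ordering they are looking at.
  const disclosure = prefs.personalized
    ? "Prioritized based on your previous activity"
    : "Shown in chronological order";

  return sorted.map((item) => ({ ...item, disclosure }));
}
```

The design choice worth noting is that the disclosure text is derived from the same setting that drives the ordering, so the message shown to the user cannot drift out of sync with what the algorithm is actually doing.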

Designers must be aware of the impact of recommendation systems. Explaining why certain content is suggested can increase user trust. Instead of obscuring how algorithms work, designers can implement visual elements or quick-access information to help users understand why a particular post appears in their feed. For example, adding a small note stating, “Recommended content based on your interest in...” gives users a concrete reason for what they see and can make the recommendation feel more credible.
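As a rough sketch of that explanatory note, the function below maps the reason behind a recommendation to a short, plain-language label. The `RecommendationReason` type, its three variants, and the `explainRecommendation` function are hypothetical names chosen for illustration; only the "Recommended content based on your interest in" wording comes from the example above.

```typescript
// Hypothetical reasons a recommender might report for surfacing a post.
type RecommendationReason =
  | { kind: "interest"; topic: string }
  | { kind: "followed_source"; source: string }
  | { kind: "popular_in_region"; region: string };

// Turn the machine-readable reason into the small note shown next to the post.
function explainRecommendation(reason: RecommendationReason): string {
  switch (reason.kind) {
    case "interest":
      return `Recommended content based on your interest in ${reason.topic}`;
    case "followed_source":
      return `Shown because you follow ${reason.source}`;
    case "popular_in_region":
      return `Popular in ${reason.region}`;
  }
}
```

Modeling the reason as a closed set of variants means the interface can only display explanations the system can actually back up, rather than a generic "Recommended for you".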

Trust in digital platforms grows when design prioritizes ethics and transparency, allowing users to understand and control the information they consume. Designers must create visual experiences that avoid manipulation—steering clear of overly edited images or sensationalized data presentations. Applying design principles that emphasize authenticity and clarity is crucial for building trust.

Transparency also plays a key role in user confidence. When platforms clearly explain how their algorithms work and how data is collected, users feel more informed and in control. Integrating tools that reveal the origins of recommendations or the logic behind content curation strengthens credibility and improves the digital experience as a whole.

This video highlights the efforts of organizations working to improve user trust in online news transparency:

AI can be used both to create fake news and to combat it. The outcome depends on how its algorithms are designed and the ethics behind their implementation. As UX designers, we have the responsibility to create experiences that promote transparency and truthfulness, ensuring that AI becomes an ally in the fight against misinformation.

What do you think? How can we improve the relationship between AI and UX to prevent the spread of fake news? Let me know in the comments!